Part 1: How to Run a "Gold Standard" Interview Scheduling Tool RFP

At Guide, we've navigated hundreds of RFPs with TA teams, at companies ranging from 100-person startups to 10,000-person enterprises. All of them ask some version of the same questions: Which scheduling tool is actually worth buying? Which tool will actually work for our team's nuanced needs?

Some of those RFPs have been incredibly well-run. Most haven't.

After going through this many times from the vendor side of the table, a few patterns become obvious quickly. The gap between a good RFP and a bad one can determine whether the tool you pick is the right one for your team — or quietly becomes shelfware within six months.

In this post, I'll cover what many TA teams get wrong in their scheduling tool evaluation process, and then walk through one of the strongest RFP processes we've seen to date.

Where Most RFPs Go Wrong

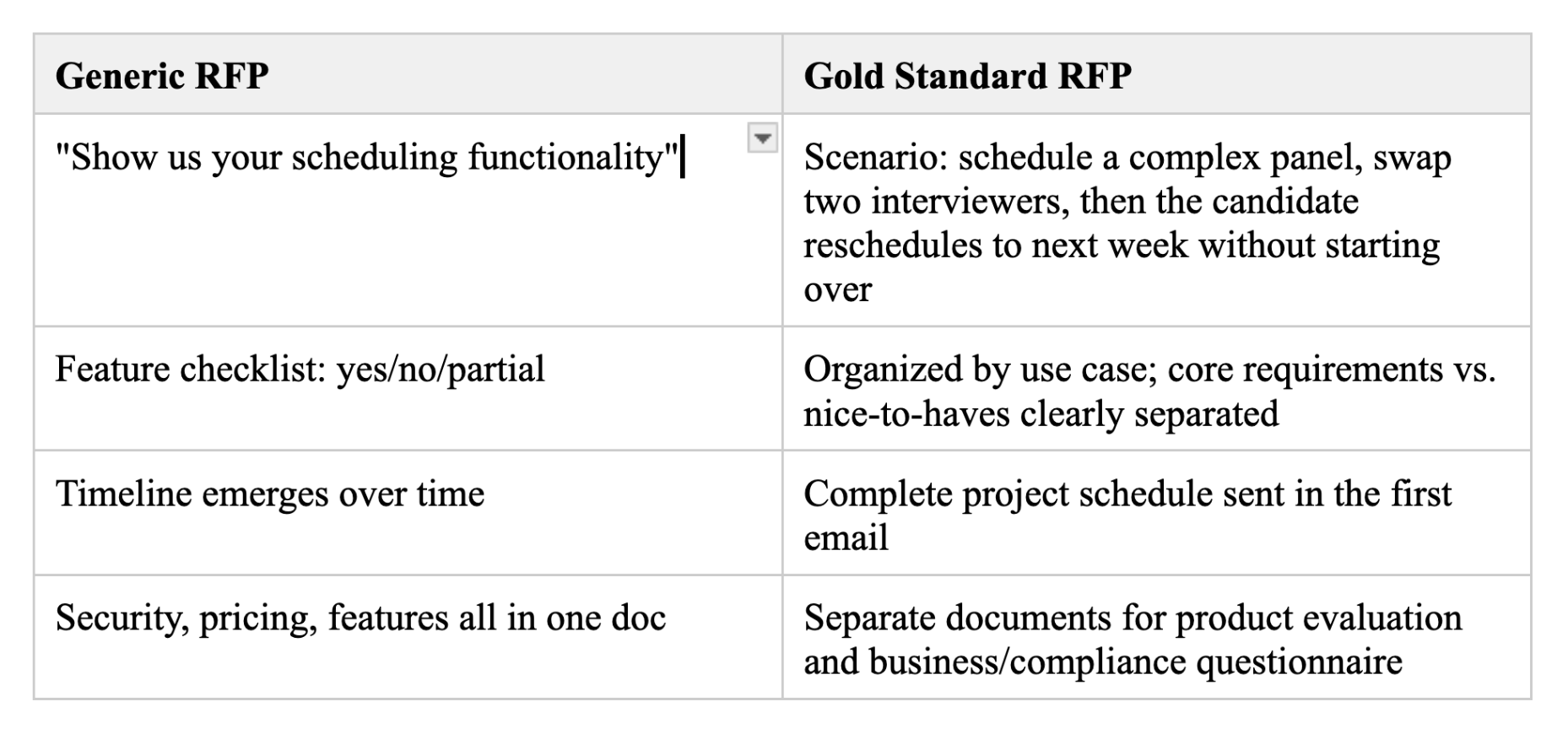

1. The feature checklist optimizes for the wrong things

The classic RFP format is a massive spreadsheet of features. The problem isn't the format. It's what the format rewards.

Vendors quickly learn to say yes to everything. Buyers rarely dig deep enough to understand what "yes" actually means: how many clicks a workflow takes, or whether a feature works the way their team actually uses it rather than only the way the demo environment is configured. The spreadsheet creates the illusion of rigor without delivering it.

The format also has a structural bias problem: requirements lists are almost always built from pain with the current tool. "We need X because our current ATS can't do X." That's understandable. But it means you're evaluating vendors against what you already know is possible, not against what's actually possible. At Guide, we've seen teams get halfway through a demo cycle and discover that a vendor has an AI agent that automates their entire scheduling workflow. It wasn't in their requirements because they didn't know to ask for it. You can't write requirements for solutions you don't yet know exist.

And then there's the thing nobody wants to say out loud: ultimately, the decision gets made by a few people whose opinions are shaped by how a handful of live demos go. The spreadsheet is a formality. The real decision happens in the room. When your formal process and your real process run in parallel and never connect, you tend to get bad outcomes. A polished demo can win even when the tool isn't the best fit, or you can pass over the right tool because its demo wasn't tailored to your needs.

2. There's no timeline, so the process drags out

Most companies treat the timeline as something that emerges over the course of the process. There's no schedule when the RFP goes out. Deadlines shift, demos get rescheduled, and key stakeholders drop in and out. The whole thing drags on for months.

The result isn't just inefficiency. It's that the process loses momentum and frequently dies without a decision. Everyone moves on. The problem that prompted the RFP doesn't go away — it just doesn't get solved.

From the vendor side, a process with no defined timeline is also a signal. It tells us the buyer isn't sure they're serious yet, or doesn't have buy-in from their internal stakeholders. We allocate resources accordingly.

3. Lack of context = generic demos

The demo is where most RFPs live or die. It's where the real decision gets made — and it's where the process breaks down most visibly.

The worst setups we see share nothing with vendors upfront. No goals. No pain points. No context about how the team actually works. They just want to "see the product in action." We're expected to walk in cold and run a polished walkthrough without knowing what actually matters to the people in the room.

So that's exactly what we do. We show the happy path. The clean scenario where everything works. And it looks great — because it's designed to look great.

What you don't see is how the tool handles the messy stuff. The candidate who needs to move between reqs mid-process. The interviewer with a private calendar. The last-minute swap two hours before an onsite. Those scenarios don't make it into generic demos. They're also exactly the scenarios that break tools post-purchase.

The result is a process where both sides are performing. Vendors optimize for impressiveness. Buyers try to evaluate something real from a demo that was never designed to show them anything real. Everyone leaves feeling good. Nobody learned much.

4. The formal process and the actual decision are disconnected

Even with a thorough RFP, the final call usually comes down to gut feel. How did key stakeholders react in the demo? Does this feel like the right partner?

That's not necessarily bad. Experience-based instinct matters. But when the formal evaluation and the real evaluation run separately and never inform each other, you get a specific failure mode: Vendor A scores highest on the scorecard, but the team "felt better" about Vendor B in the demo. The decision gets made on vibes anyway, except now you've also wasted everyone's time on a process that didn't inform it.

The issue isn't that people trust their gut. It's that the RFP wasn't designed to capture what actually matters to the decision-makers.

What a Gold Standard RFP Looks Like

Below, I walk through one of the strongest RFP processes we've been part of at Guide. It was run by a large, well-known enterprise. What made it stand out wasn't more rigor or more questions. It was that every element was clearly designed to get to a real decision, not to check a box.

What I learned from them about choosing a scheduling tool:

- Set the timeline before anything goes out. Assign a DRI. Define when vendors receive the RFP, when questions are due, when demos happen, when you decide. Send that schedule in the first email. It forces your process to have a spine — and signals to vendors that you're serious.

- Write requirements that reflect real pain. The more specific, the better. Vague requirements let vendors say yes to everything and tell you nothing. And treat them as a living document — plan to update them after the first round of demos before you go into the final round.

- Make vendors demo the hard stuff. Build scenarios around your actual workflows and share them in advance. The messy situations, the cascading changes, the edge cases — those are what break tools in production. What you learn in 20 minutes of scenario-based demos beats two hours of a polished walkthrough.

- Design your process around how you'll actually decide. Define criteria upfront. Get the right people in the room. Collect structured feedback while impressions are fresh. If the real decision happens based on how key stakeholders feel in the demo, build a process that captures that. Don't run a formal evaluation in parallel that no one ends up using.

Now let's zoom into exactly what made their process run so effectively.

A defined timeline shared before kickoff

The first thing we received wasn't a list of requirements. It was an invitation email with a complete project schedule attached — with specific dates and deadlines for every stage of the process, and named contacts for the business lead, project manager, and procurement lead.

As a vendor, you receive that and immediately think: these people know what they're doing. You know exactly what's expected of you and when. You can actually allocate resources and prepare properly.

This sounds like basic project management, because it is. But I cannot overstate how rare it actually is. Without a schedule set upfront, the timeline emerges as the process goes, and the RFP drags for months, loses momentum, and frequently dies without a decision.

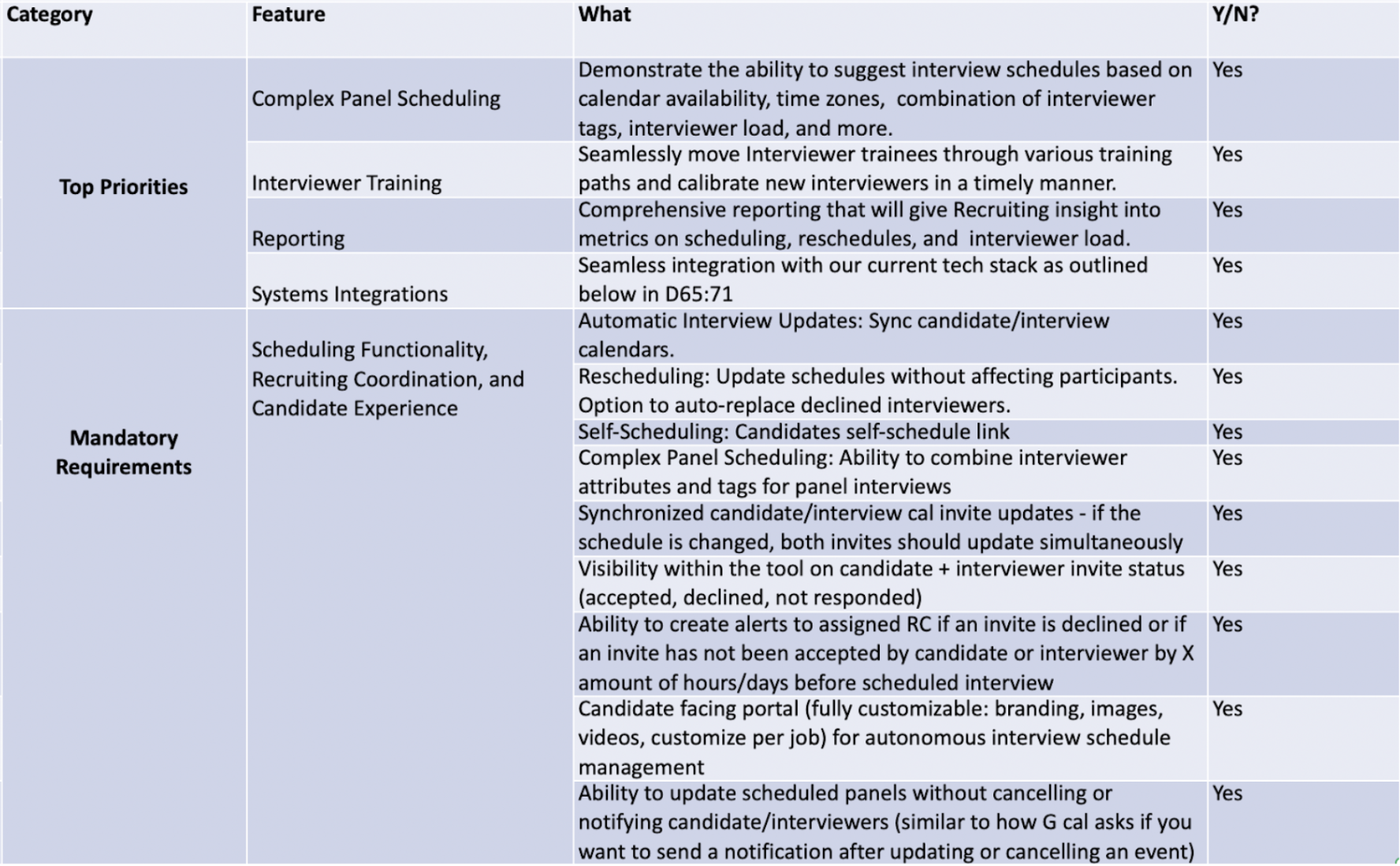

Specific requirements revealed the true pain points

The requirements weren't a feature checklist. They were organized by use case and workflow, with core requirements and nice-to-haves clearly separated.

But what really stood out was the specificity. Compare these two ways of asking for the same thing:

Generic version (what most RFPs say):

- Rescheduling functionality

- Calendar management

- Candidate communication tools

The company's version (what they actually wrote):

- "Ability to update scheduled panels without cancelling or notifying candidate/interviewers" (similar to how Google Calendar asks if you want to send a notification when updating an event)

- "Control over who receives an interview calendar invite and when (i.e. a candidate does not receive invite until interviewer accepts)"

- "Ability to reschedule a single event within a complex loop interview without needing to reschedule the entire loop"

- "Ability to create alerts to assigned RC if an invite is declined or if an invite has not been accepted by candidate or interviewer by X hours/days before scheduled interview"

Nobody writes requirements like that unless they've been burned by the absence of those things. The specificity tells you everything about the real pain they're trying to solve. That's what makes it useful.

That said: no requirements doc, no matter how good, will ever be complete. You're going to discover things you didn't know to ask for the moment you start seeing demos. A vendor might show you something that makes your requirements list feel five years old.

The best RFP processes treat requirements as a starting point, not a locked spec. Build in a checkpoint after the first round of demos to revisit what you've seen and update your criteria before going into the final round. The process should get sharper as you learn — not stay frozen at whatever you knew on day one.

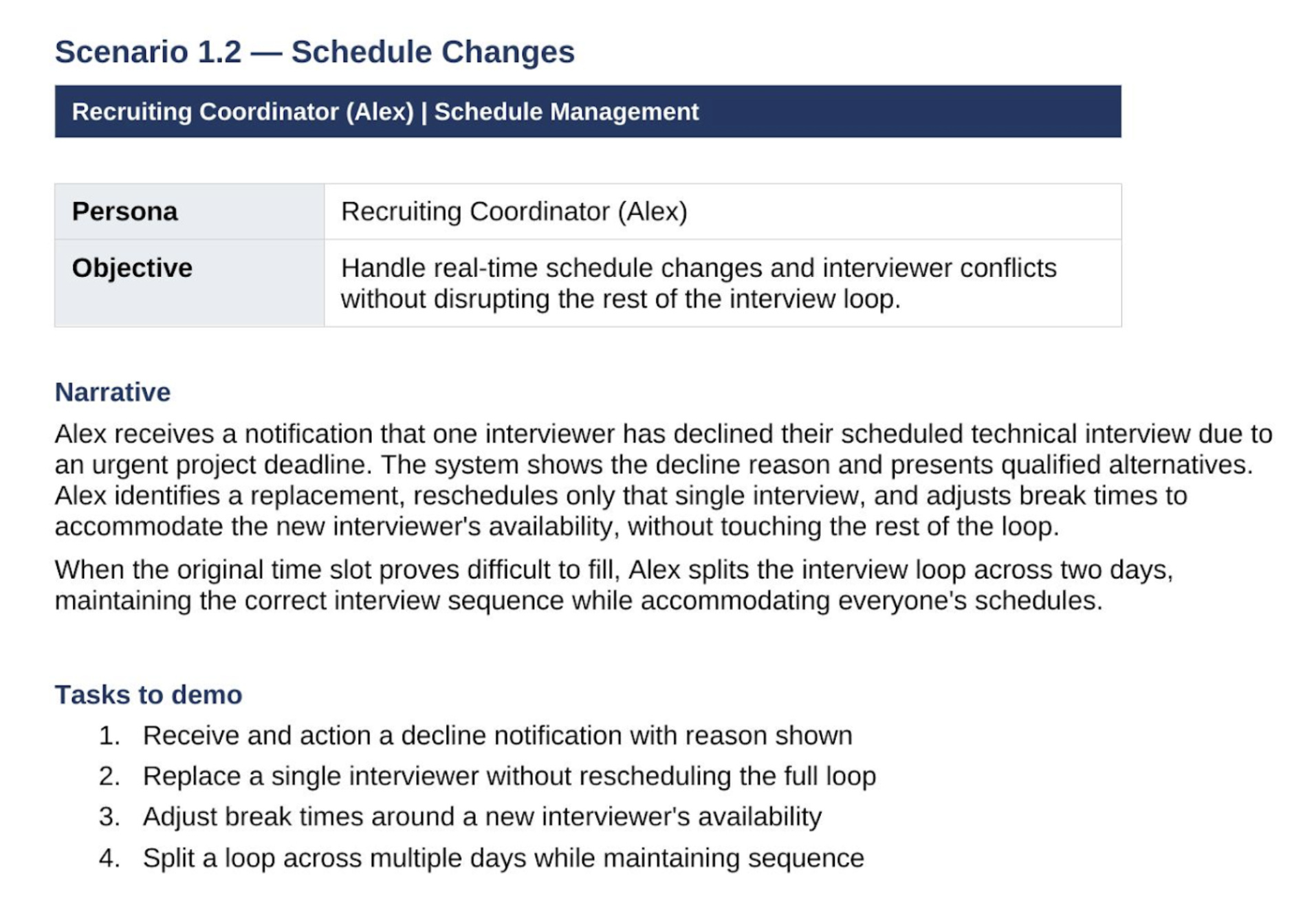

Pre-drafted, persona-specific case studies drove the demo agenda

This company sent us a PDF of specific scheduling scenarios they wanted us to demo, and they sent it ahead of time so our sales team could prepare a demo showing exactly what they needed to see. A few examples:

These are real scenarios. They range from the most basic use cases to more complex situations with cascading changes and edge cases that show up every week for a busy recruiting coordinator (RC) team at a company with real hiring volume. They're also exactly the kinds of scenarios that generic demos are designed to avoid.

Compare that to a typical demo request: "We'd like to see your scheduling functionality, reporting and how candidates and interviewers interact with the platform."

That gives the vendor a free pass to show you their most polished happy path and nothing else. You learn almost nothing useful.

A process designed around how they'd actually decide

Two structured rounds of presentations with different stakeholders — TA team first, then legal, privacy and compliance. A named DRI driving every stage. Clear criteria defined before demos started, not after.

The result: by the time they got to a decision, the formal evaluation and the gut feel in the room were pointing at the same thing. There was no "the scorecard says one thing but the team feels differently" problem — because the process had been designed from the start to surface what actually mattered to the people making the call.

The Bottom Line

Most RFPs don't fail because someone asked the wrong questions. They fail because the process around them was broken from the start — no timeline, no real scenarios, no connection between the formal evaluation and the actual decision.

In Part 2, we'll get into what happens after you select a vendor — specifically, what the best companies do during implementation to make sure the tool actually gets adopted instead of quietly becoming shelfware.
